April 2, 2026

“Skills are all you need”

… and here’s how to build them

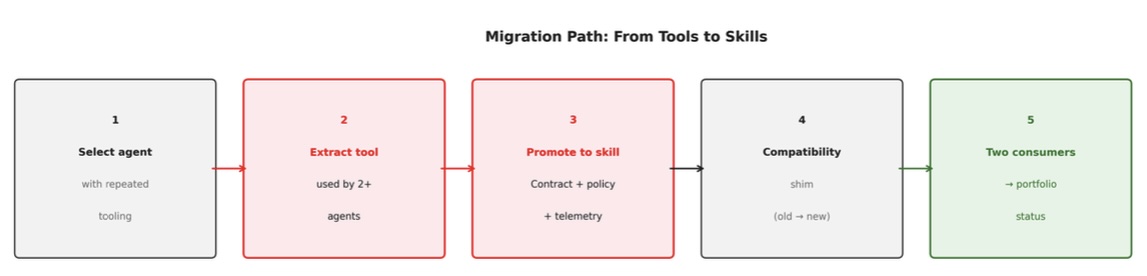

Enterprise AI is accumulating debt faster than most organisations realise. The debt is not in the models – it’s in the plumbing around them. Teams build agents, wire in tools, and ship capabilities that only one agent can call, with no policy enforcement, no telemetry, and no owner. When the model changes or the agent is retired, the tool disappears with it. Nothing was reusable because nothing was designed to be.

Our CAAI whitepaper, “Skills are all you need”, addressed this directly. The argument is simple: the right unit of execution in an enterprise agent ecosystem is the skill, a governed, callable capability with a stable contract. Not a prompt. Not a loosely coupled tool. A skill, built with the same discipline as any production service. The high-level approach was published earlier this week.

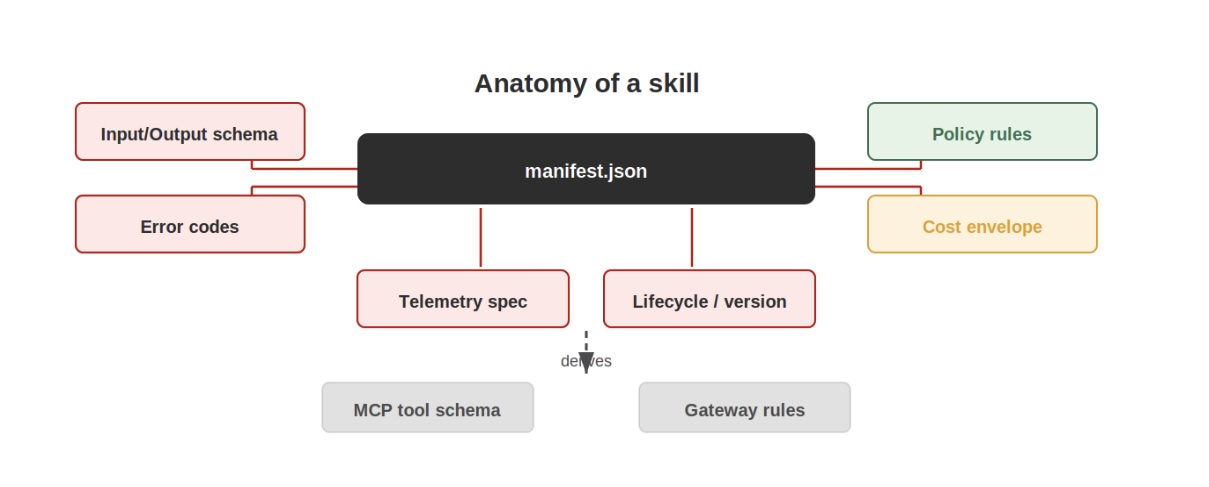

The contract is the product

Every other decision in skill engineering flows from one place: the manifest. The manifest defines what the skill accepts, what it returns, how it fails, what policy it requires, and what it costs per invocation. It is machine-readable, versioned, and the source of truth for MCP tool schemas, gateway validation rules, and catalogue metadata.

A well-formed manifest includes typed input and output schemas, a catalogue of stable error codes mapped to HTTP status equivalents, data classification requirements, required OAuth scopes, and a cost envelope declaring p50, p95, and maximum cost per invocation.

That last element deserves attention. Attaching cost to the contract makes it a first-class property of the skill. If bounded cost cannot be guaranteed (because the skill calls a model with unbounded output, for instance), the skill should be flagged as experimental until that is resolved. Agent systems appear to have zero marginal cost until a bill arrives. The cost envelope exists to prevent that surprise.
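To make the shape of a manifest concrete, here is a minimal sketch in Python. It is an illustration only, not the whitepaper's schema: the class names, field names, the `lifecycle` property, the error codes, and the example values are all assumptions. It shows the elements listed above and the rule that a skill without a guaranteed cost ceiling stays experimental.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class CostEnvelope:
    """Declared cost per invocation (currency unit is an assumption)."""
    p50: float
    p95: float
    maximum: Optional[float]  # None: no upper bound can be guaranteed


@dataclass(frozen=True)
class SkillManifest:
    """Hypothetical manifest shape; field names are illustrative."""
    name: str
    version: str
    input_schema: dict        # JSON-Schema-style description of the input
    output_schema: dict       # JSON-Schema-style description of the result
    error_codes: dict         # stable error code -> HTTP status equivalent
    data_classification: str
    required_scopes: tuple    # OAuth scopes the caller must hold
    cost: CostEnvelope

    @property
    def lifecycle(self) -> str:
        # Unbounded cost keeps the skill flagged as experimental.
        return "stable" if self.cost.maximum is not None else "experimental"


manifest = SkillManifest(
    name="asset-identity-resolution",
    version="1.2.0",
    input_schema={"type": "object", "required": ["asset_tag"]},
    output_schema={"type": "object", "required": ["asset_id"]},
    error_codes={"ASSET_NOT_FOUND": 404, "BUDGET_EXCEEDED": 429},
    data_classification="internal",
    required_scopes=("assets:read",),
    cost=CostEnvelope(p50=0.002, p95=0.01, maximum=None),
)
print(manifest.lifecycle)  # prints "experimental"
```

In practice the manifest would more likely live in a versioned file that generates the MCP tool schema and gateway rules; the dataclass form is used here only to keep the example self-contained.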

Six principles that are not optional

Our Developer Guide whitepaper sets out six engineering principles and is explicit that they are the minimum conditions for operating a skill in a regulated enterprise environment, not aspirational targets.

Every skill has explicit timeouts, retry budgets, and compensation paths. If it cannot complete within its budget, it fails with a stable error code. Business rules belong in code and policy files, not in prompts. A skill may use a language model internally for inference or summarisation, but any action that changes enterprise state is governed by deterministic logic. Credentials are scoped and short-lived, issued by the gateway per invocation. Agents never hold operational secrets. And if a skill cannot be instrumented, it is not production-ready.
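The first of these principles can be sketched in a few lines of Python. This is a simplified illustration under stated assumptions: `SkillError`, `run_with_budget`, and both error codes are hypothetical names, and the deadline is only checked between attempts rather than interrupting a call that is already running.

```python
import time


class SkillError(Exception):
    """Failure carrying a stable, machine-readable error code."""

    def __init__(self, code: str, message: str):
        super().__init__(message)
        self.code = code


def run_with_budget(operation, *, timeout_s, max_attempts, clock=time.monotonic):
    """Call operation() until it succeeds, attempts run out, or the deadline passes."""
    deadline = clock() + timeout_s
    for attempt in range(1, max_attempts + 1):
        # The deadline is checked before each attempt, not during one.
        if clock() >= deadline:
            raise SkillError("TIMEOUT_EXCEEDED", "deadline passed before attempt %d" % attempt)
        try:
            return operation()
        except ConnectionError:
            continue  # transient failure: consume one unit of the retry budget
    raise SkillError("RETRY_BUDGET_EXHAUSTED", "gave up after %d attempts" % max_attempts)
```

The caller always receives either a result or one of two stable codes, never an open-ended wait. A production version would also need to bound each individual call and trigger the compensation path on failure.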

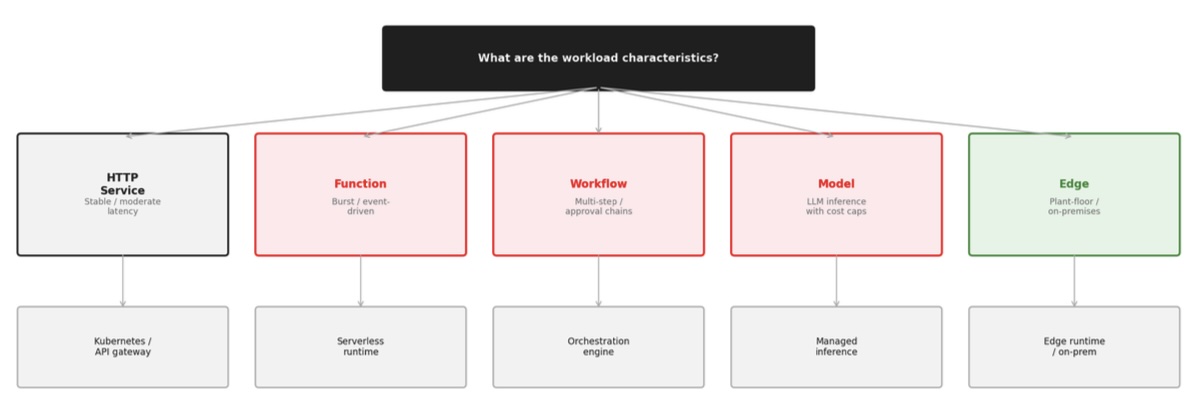

Five runtime patterns

Not every skill runs the same way. The right execution substrate depends on latency requirements, governance constraints, and operational trade-offs.

HTTP service skills suit stable integrations with moderate latency: long-lived processes behind Kubernetes or an API gateway with predictable scaling. Function skills suit burst workloads and event-driven processing, trading cold-start latency for elastic scaling. Workflow skills handle multi-step enterprise processes where long-running state and compensation paths justify a dedicated orchestrator. Model skills wrap inference endpoints with token budgets, output schema validation, and cost caps. And edge skills run on plant-floor or on-premises infrastructure where cloud round-trips are not acceptable, which introduces specific concerns: local secrets handling, offline audit buffering, and signed artefacts with staged rollouts.

Choose the substrate to fit the workload. Forcing everything through a serverless function because it is convenient is the kind of decision that becomes painful at scale.

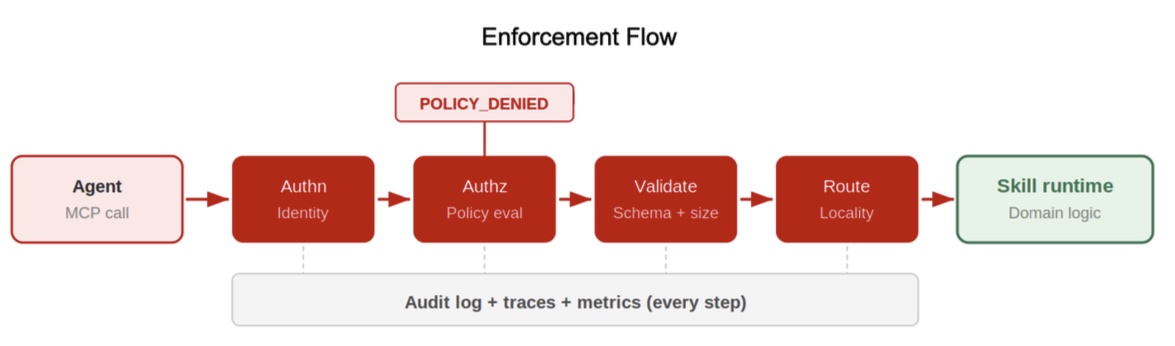

The gateway is the enforcement boundary

Gateway concerns must not be pushed into individual skills. Authentication, authorisation, policy evaluation, quota enforcement, request validation, routing, trace context propagation, and audit logging all belong to the gateway. When those concerns are distributed across individual skills, platform-level assertions about control become impossible.

The practical result is simpler skill implementations. No credential management. No access policy evaluation. No cross-cutting concerns. Skills focus on domain behaviour; the gateway handles everything else. A denied request returns a stable error code without reaching the skill runtime at all.
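A toy gateway makes the last point visible. The sketch below is an assumption-laden illustration, not a real gateway: `make_gateway`, the registry shape, the scope names, and the error codes are all hypothetical, and only the authorisation check is modelled.

```python
def make_gateway(registry):
    """registry maps skill name -> (handler, frozenset of required scopes)."""

    def invoke(skill_name, caller_scopes, payload):
        if skill_name not in registry:
            return {"ok": False, "error": "SKILL_NOT_FOUND"}
        handler, required = registry[skill_name]
        # Authorisation is decided here, before any skill code runs.
        if not required <= set(caller_scopes):
            return {"ok": False, "error": "ACCESS_DENIED"}
        return {"ok": True, "result": handler(payload)}

    return invoke


reached = []  # records every payload that actually reaches the skill


def create_work_order(payload):
    reached.append(payload)
    return {"work_order_id": "WO-1"}


gateway = make_gateway(
    {"work-order-creation": (create_work_order, frozenset({"workorders:write"}))}
)
denied = gateway("work-order-creation", ["assets:read"], {"asset_id": "A-7"})
print(denied, len(reached))  # {'ok': False, 'error': 'ACCESS_DENIED'} 0
```

The skill handler contains no access logic at all, which is the simplification the gateway boundary is meant to buy.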

This is not a minor implementation detail. It is the architectural decision that determines whether a skill platform is governable or not.

Policy as code, co-located with the skill

Policy evaluation should be automated, testable, and versioned alongside the skill. The whitepaper recommends Open Policy Agent with Rego, though any engine supporting declarative rules and test fixtures works.

The key rule: default deny. Skills must be explicitly permitted, not permitted by omission. And policy rules belong in the skill repository, not a central policy store. Centralising policy creates a coupling point – every skill change requires a separate policy review cycle in a repository the skill team does not own. Co-location means policy changes are tested in the same CI run as the implementation change.
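The default-deny rule and its co-located test fixtures can be illustrated in plain Python. This is a stand-in for a Rego policy, not Rego itself, kept in Python so the example runs without a policy engine; the rule format, role names, and `is_allowed` are assumptions made for illustration.

```python
# Explicit allow rules that would live in the skill repository.
ALLOW_RULES = [
    {"skill": "failure-forecasting", "caller_role": "maintenance-agent", "action": "invoke"},
]


def is_allowed(request, rules=ALLOW_RULES):
    """Return True only when a non-empty rule matches every one of its fields."""
    for rule in rules:
        if rule and all(request.get(key) == value for key, value in rule.items()):
            return True
    return False  # default deny: no matching rule means no access


# Fixtures run in the same CI job as the skill's own tests.
FIXTURES = [
    ({"skill": "failure-forecasting", "caller_role": "maintenance-agent", "action": "invoke"}, True),
    ({"skill": "failure-forecasting", "caller_role": "finance-agent", "action": "invoke"}, False),
    ({"skill": "work-order-creation", "caller_role": "maintenance-agent", "action": "invoke"}, False),
]

for request, expected in FIXTURES:
    assert is_allowed(request) is expected
```

The third fixture is the important one: a skill with no rule at all is denied, so a forgotten policy fails closed rather than open.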

Composition requires explicit dependency declarations

Skills will invoke other skills. A predictive maintenance workflow might call asset identity resolution, anomaly detection, failure forecasting, and work-order creation in sequence. Composition is where the architecture proves itself or collapses under cascading failures and hidden coupling.

All inter-skill calls route through the gateway. Dependencies must be explicit in the manifest – which upstream skills are invoked, what privilege boundaries are crossed, and what the failure semantics are.

Transaction semantics in skill chains are almost always compensating rather than ACID. Design write skills with idempotency keys bound to the invocation, bounded retry budgets, and a named compensation action. And declare circuit breaker thresholds in the manifest: a skill that hangs degrades every caller.
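A circuit breaker driven by a declared threshold might look like the sketch below. It is deliberately minimal and rests on assumptions: the `CircuitBreaker` class and the `CIRCUIT_OPEN` code are hypothetical, the threshold would in practice be read from the manifest, and there is no half-open state or timed reset.

```python
class CircuitBreaker:
    """Stops calling a dependency after too many consecutive failures."""

    def __init__(self, failure_threshold: int):
        self.failure_threshold = failure_threshold
        self.consecutive_failures = 0

    @property
    def is_open(self) -> bool:
        return self.consecutive_failures >= self.failure_threshold

    def call(self, operation):
        if self.is_open:
            # Fail fast instead of letting callers queue behind a hung skill.
            raise RuntimeError("CIRCUIT_OPEN")
        try:
            result = operation()
        except Exception:
            self.consecutive_failures += 1
            raise
        self.consecutive_failures = 0  # any success closes the count
        return result
```

Once the breaker is open, callers receive an immediate, stable failure they can route to a compensation action, rather than inheriting the latency of the failing dependency.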

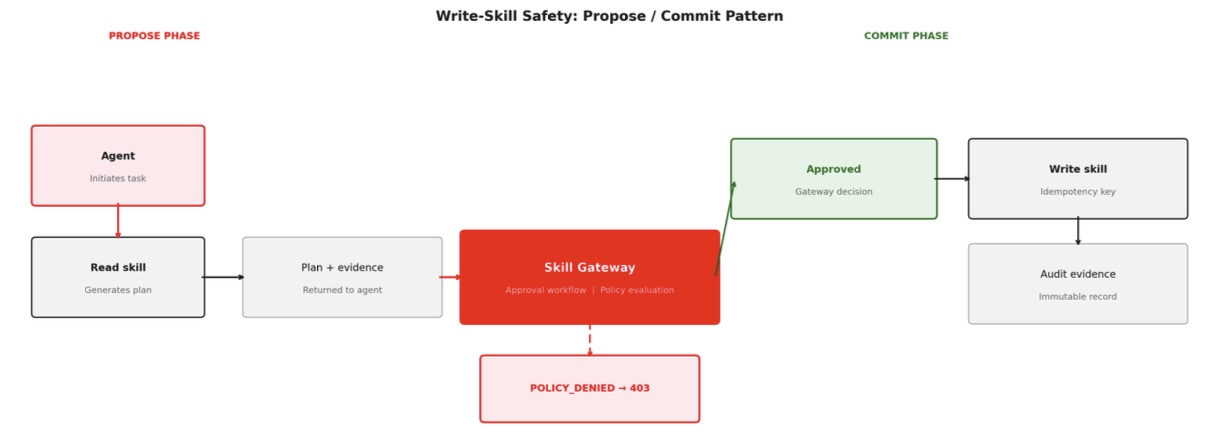

Write skills need a propose/commit split

Write skills change enterprise state, which demands stronger controls than read skills. The most effective default is to split every write operation into two phases.

In the propose phase, the agent calls a read-only skill that generates a plan. The gateway then enforces an approval workflow. In the commit phase, the commit skill performs bounded changes using idempotency keys, honours change windows, and writes immutable audit evidence.
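The two phases can be sketched as follows. This is an illustrative outline under assumptions, not a reference design: `Plan`, `propose`, and `ApprovalGate` are hypothetical names, the approval step is reduced to a single call, and change windows are not modelled.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Plan:
    plan_id: str
    changes: tuple  # ordered, bounded set of intended changes


def propose(asset_id: str) -> Plan:
    """Read-only phase: describes the change without performing it."""
    return Plan(plan_id="plan-" + asset_id, changes=(("create_work_order", asset_id),))


class ApprovalGate:
    """Stands in for the gateway's approval workflow."""

    def __init__(self):
        self._approved = set()
        # Append-only list here; real audit evidence must be immutable.
        self.audit_log = []

    def approve(self, plan: Plan, approver: str) -> None:
        self._approved.add(plan.plan_id)
        self.audit_log.append(("approved", plan.plan_id, approver))

    def commit(self, plan: Plan, apply_change) -> None:
        if plan.plan_id not in self._approved:
            raise PermissionError("PLAN_NOT_APPROVED")
        for change in plan.changes:
            apply_change(change)
        self.audit_log.append(("committed", plan.plan_id, len(plan.changes)))
```

Because the plan is an immutable value, what is approved is exactly what is committed; the commit skill cannot widen its own scope between the two phases.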

Idempotency keys must be bound to the invocation ID, not generated by the skill. The gateway issues invocation IDs; using them as idempotency keys means duplicate calls from retrying agents are automatically detected and deduplicated without the skill maintaining its own state.
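A small sketch shows why binding the key to the invocation ID removes state from the skill. The `Gateway` class, its in-memory result store, and the `idempotency_key` parameter are assumptions for illustration; a real gateway would persist completed invocations durably.

```python
import uuid


class Gateway:
    """Issues invocation IDs and deduplicates commits that reuse one."""

    def __init__(self, commit_skill):
        self._commit_skill = commit_skill
        self._completed = {}  # invocation_id -> stored result

    def new_invocation_id(self) -> str:
        return str(uuid.uuid4())

    def commit(self, invocation_id: str, plan):
        # A retry with the same invocation ID gets the stored result back.
        if invocation_id in self._completed:
            return self._completed[invocation_id]
        result = self._commit_skill(plan, idempotency_key=invocation_id)
        self._completed[invocation_id] = result
        return result


executions = []  # every time the skill really runs, it is recorded here


def create_work_order(plan, idempotency_key):
    executions.append(idempotency_key)
    return {"work_order_id": "WO-" + str(len(executions))}


gateway = Gateway(create_work_order)
invocation_id = gateway.new_invocation_id()
first = gateway.commit(invocation_id, {"asset_id": "A-7"})
retry = gateway.commit(invocation_id, {"asset_id": "A-7"})
print(first == retry, len(executions))  # True 1
```

The skill runs once however many times the agent retries, and it keeps no deduplication table of its own.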

Observability is not optional

Every invocation should produce a trace span, structured metrics, and a log envelope. Instrument with OpenTelemetry to preserve trace context across skill composition chains. The minimum telemetry per invocation: a trace ID linking to the broader orchestration context, an invocation ID unique to this call, skill name and version, outcome, latency, and cost attribution tags.

Cost attribution connects skill execution to business unit budgets. Tag every invocation with at minimum: skill name, version, caller identity, and business unit. Make cost variance visible in the same dashboard cycle as reliability – not after the monthly invoice.
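The minimum telemetry can be sketched as a structured log envelope. This example uses only the standard library so that it runs anywhere; it does not use the OpenTelemetry SDK, and a real implementation would emit spans and metrics through it. The wrapper name, field names, and tag keys are assumptions.

```python
import json
import time
import uuid

REQUIRED_TAGS = ("skill", "version", "caller", "business_unit")


def invoke_with_telemetry(skill, payload, *, trace_id, tags, emit=print):
    """Run a skill and emit one structured record per invocation."""
    missing = [tag for tag in REQUIRED_TAGS if tag not in tags]
    if missing:
        raise ValueError("missing cost attribution tags: " + ", ".join(missing))
    invocation_id = str(uuid.uuid4())
    started = time.perf_counter()
    outcome = "error"
    try:
        result = skill(payload)
        outcome = "success"
        return result
    finally:
        # Emitted on success and on failure, so errors are never silent.
        emit(json.dumps({
            "trace_id": trace_id,
            "invocation_id": invocation_id,
            "outcome": outcome,
            "latency_ms": round((time.perf_counter() - started) * 1000, 3),
            **tags,
        }))


records = []
invoke_with_telemetry(
    lambda payload: {"anomaly": False},
    {"asset_id": "A-7"},
    trace_id="trace-123",
    tags={
        "skill": "anomaly-detection",
        "version": "2.0.1",
        "caller": "maintenance-agent",
        "business_unit": "plant-ops",
    },
    emit=records.append,
)
```

Rejecting an invocation that lacks attribution tags is a design choice made for this sketch: it enforces the rule that an uninstrumented skill is not production-ready at call time rather than at review time.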

The anti-patterns to avoid now

We identify four that recur consistently in early skill-centric implementations.

Embedding business logic in prompts without version control or tests creates rules that break silently when model behaviour changes. Passing credentials through agent context exposes secrets to injection and logging. Accepting unbounded payloads without size limits lets sensitive inputs reach backends unfiltered. And publishing skills with no registered owner or telemetry creates orphaned capabilities that degrade without anyone noticing.

All four are easy to introduce and expensive to fix at scale.

What this means for teams building today

The engineering discipline required (contract design, schema validation, policy-as-code, instrumentation, lifecycle management) is the same discipline that made SOA and microservices operationally trustworthy. What is different is the context. These capabilities are invoked by probabilistic reasoning engines in non-deterministic sequences. That makes the discipline more important, not less.

Build the contract first. Make the gateway the enforcement boundary. Instrument everything. Keep write skills behind propose/commit. Treat the catalogue as a product.

The full developer guide, covering MCP integration, CI/CD pipeline design, state and memory taxonomy, marketplace publishing, and the complete developer checklist, is available from the Centre for Architecture & AI at Hitachi Digital Services.