May 11, 2026

AI pricing is changing and execution is the new unit of work

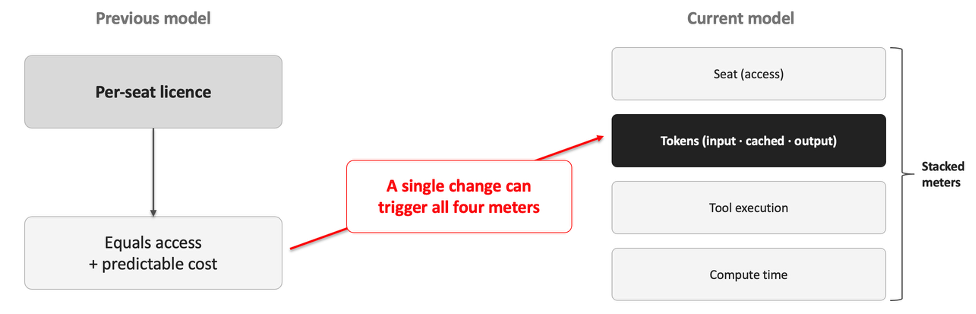

Where licenses once governed who could use a tool, meters now govern how much that tool runs. Frontier models, coding agents and hyperscalers each charge through some combination of seats, tokens, pooled credits and compute time. Credits are an internal accounting convenience. They are not a portable currency, and comparing vendors on credit price alone is misleading. A single change request can now trigger seat access, token consumption, tool invocation and underlying compute. Cost management is therefore a system-design problem as well as a contracting problem. Governance has to follow the meter, not the seat.
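A minimal sketch makes the point about credit price concrete. `vendor_a` sells cheaper credits, but its meter burns more of them per task. The vendor names, prices and credits-per-task figures are all invented, and the sketch assumes credits-per-task has been measured on the team's own workload rather than taken from a price sheet.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CreditOffer:
    """A hypothetical credit-priced offer; all figures are illustrative."""
    name: str
    price_per_credit: float  # currency units per credit
    credits_per_task: float  # credits the vendor's meter burns for one typical task

    def cost_per_task(self) -> float:
        # What is actually paid for one unit of work.
        return self.price_per_credit * self.credits_per_task


offers = [
    CreditOffer("vendor_a", price_per_credit=0.04, credits_per_task=25),
    CreditOffer("vendor_b", price_per_credit=0.10, credits_per_task=6),
]

cheapest_credit = min(offers, key=lambda o: o.price_per_credit)
cheapest_task = min(offers, key=lambda o: o.cost_per_task())

for o in offers:
    print(f"{o.name}: {o.price_per_credit:.2f} per credit, {o.cost_per_task():.2f} per task")
```

The offer with the cheapest credit is not the offer with the cheapest task, which is why comparisons need a per-task or per-outcome denominator.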

Executive implication

The same development team can ship the same work at the same quality level and still face a substantially larger bill if routing, context and autonomous execution are unmanaged.

From access pricing to execution pricing

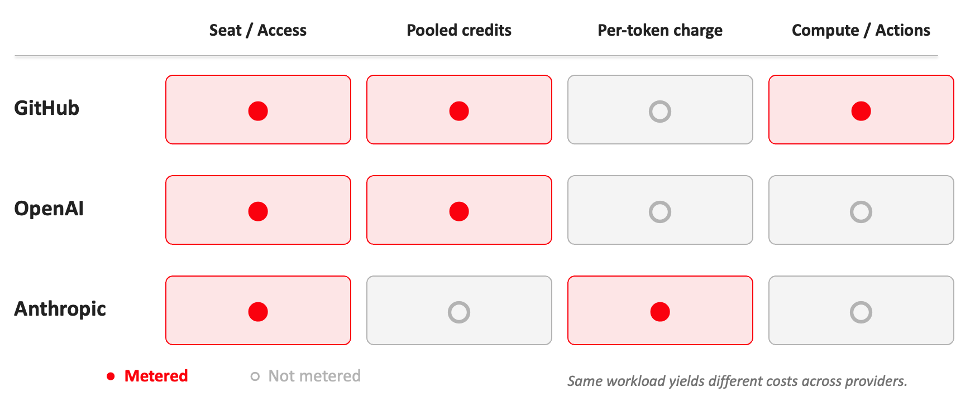

GitHub, OpenAI and Anthropic reflect the broader direction of the market. Seat access still matters, but it no longer explains cost. The commercial centre of gravity is moving towards usage: tokens, premium requests, compute, cached inputs and task execution.

The result is a fragmented market in which pricing structure is as important as model capability. A workload that suits a direct per-token model on cost grounds may suit a pooled-credit model on operational grounds.

This shift makes execution measurable at fine granularity. Context size, model selection and workflow design now have cost consequences. They also have quality consequences, since the same architectural decisions that drive cost also drive latency and output reliability.

Vendors now combine access and usage in different ways. These differences are commercial and operational: they affect which workloads each provider is economical for, and they affect how much cost forecasting is possible before commitments are signed.

From cloud spend to reasoning spend

The comparison with cloud FinOps shows how the discipline carries over:

- Cloud FinOps focuses on compute utilisation, storage growth, network egress, reserved instances, VM sprawl and cost per workload.

- Agentic FinOps applies the same discipline to token and reasoning utilisation, context growth, tool-call and orchestration overhead, governed reasoning capacity, agent and workflow sprawl, and cost per outcome.

The shift matters because execution is no longer measured only in infrastructure consumption. It is now measured in reasoning activity, model selection, orchestration depth and autonomous behaviour. Finance, procurement and engineering teams therefore need workload-level controls over:

- Token and reasoning utilisation: Track spend where work is actually executed.

- Context growth: Control prompt, repository and retrieval scope.

- Routing policy: Match model choice to risk, value and task type.

- Cost per outcome: Connect spend to tickets, changes, quality and risk.
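Cost per outcome can be sketched as a small aggregation over priced usage records. The record shape, the ticket IDs and the rule that abandoned work is spread over shipped tickets are assumptions chosen for illustration, not a reporting standard.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class UsageRecord:
    ticket: str  # hypothetical work-item ID the spend is attributed to
    model: str
    tokens: int
    cost: float  # already priced, in currency units


def cost_per_outcome(records, shipped_tickets):
    """Return spend per ticket and total spend divided by tickets that shipped."""
    per_ticket = defaultdict(float)
    for r in records:
        per_ticket[r.ticket] += r.cost
    total = sum(per_ticket.values())
    shipped = [t for t in per_ticket if t in shipped_tickets]
    # Abandoned work still counts: its spend is spread over what actually shipped.
    per_shipped = total / len(shipped) if shipped else float("inf")
    return dict(per_ticket), per_shipped


records = [
    UsageRecord("T-1", "light", 2_000, 0.50),
    UsageRecord("T-1", "premium", 8_000, 2.50),
    UsageRecord("T-2", "light", 1_000, 0.25),
    UsageRecord("T-3", "premium", 12_000, 3.75),  # abandoned, never merged
]
per_ticket, per_shipped = cost_per_outcome(records, shipped_tickets={"T-1", "T-2"})
```

Charging abandoned work to shipped outcomes is a deliberate choice: it keeps wasted runs visible instead of letting them disappear from the per-ticket view.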

Hitachi Digital Services approach

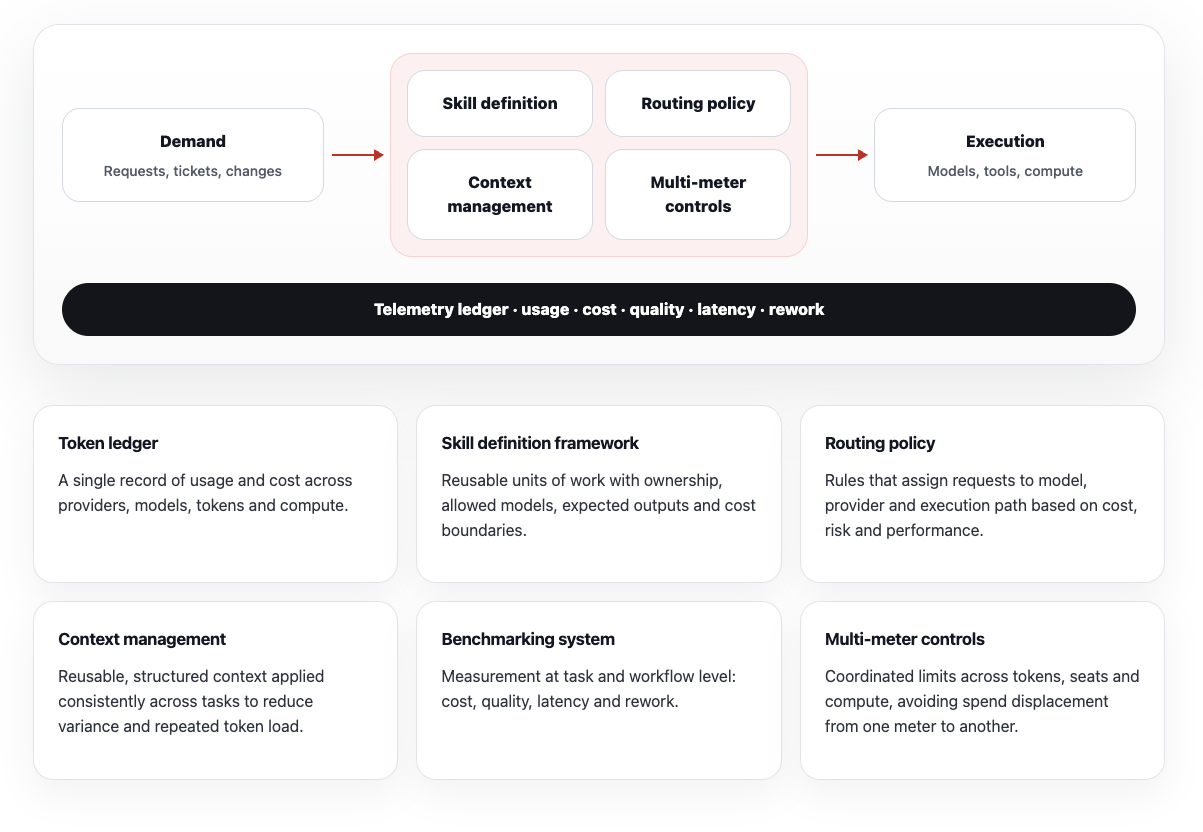

A control model for token-based execution

A token-based environment needs governance between work requests and execution targets, with telemetry returning to a central ledger. The model below turns ad hoc execution into measurable, reusable and policy-driven work.
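One way to picture the control model is a gate that every request passes through before reaching a model, with each decision written to a ledger. This is a minimal sketch under stated assumptions: `ControlPlane`, `Ledger`, the per-team budgets, the per-1,000-token prices and `fake_execute` are all hypothetical stand-ins, not a vendor API or the Hitachi Digital Services implementation.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class WorkRequest:
    team: str
    task_type: str
    est_tokens: int  # caller's estimate, used only for the pre-execution budget check


@dataclass
class Ledger:
    """Central ledger that every execution reports back to."""
    entries: list = field(default_factory=list)

    def record(self, **event) -> None:
        self.entries.append(event)


class ControlPlane:
    """Hypothetical policy gate between work requests and execution targets."""

    def __init__(self, ledger: Ledger, budgets: dict, price_per_1k: dict):
        self.ledger = ledger
        self.budgets = dict(budgets)  # remaining spend per team
        self.price_per_1k = price_per_1k  # price per 1,000 tokens, per model

    def submit(self, req: WorkRequest, model: str,
               execute: Callable[[WorkRequest, str], tuple]) -> Optional[str]:
        est_cost = req.est_tokens / 1000 * self.price_per_1k[model]
        if est_cost > self.budgets.get(req.team, 0.0):
            # The policy decision is logged even when nothing runs.
            self.ledger.record(team=req.team, task=req.task_type, model=model,
                               status="blocked", tokens=0, cost=0.0)
            return None
        result, tokens_used = execute(req, model)
        cost = tokens_used / 1000 * self.price_per_1k[model]
        self.budgets[req.team] -= cost
        self.ledger.record(team=req.team, task=req.task_type, model=model,
                           status="done", tokens=tokens_used, cost=cost)
        return result


def fake_execute(req, model):
    # Stand-in for a real model or agent call: returns (result, tokens actually used).
    return f"{req.task_type} via {model}", req.est_tokens


ledger = Ledger()
plane = ControlPlane(ledger, budgets={"squad-a": 10.0},
                     price_per_1k={"light": 0.5, "premium": 5.0})
first = plane.submit(WorkRequest("squad-a", "small_edit", 2_000), "light", fake_execute)
second = plane.submit(WorkRequest("squad-a", "architecture", 4_000), "premium", fake_execute)
```

The second request is blocked because its estimated premium cost exceeds the remaining budget, and the ledger records both outcomes, so blocked work is as visible as completed work.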

Context is an economic asset

Reusable, well-structured context reduces token consumption and improves consistency. Poorly managed context increases both cost and variance, often increasing time to a usable answer.

Organisations should manage context with ownership, version control and reuse policies. Context packs, standards, patterns and known constraints should not be assembled repeatedly inside individual workflows.

Recomputing the same inputs increases spend without producing new value.
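Managing context with ownership, versioning and reuse can be sketched as a registry that assembles each named, versioned context pack once and hands back the same object afterwards. `ContextPack`, `ContextRegistry` and the sample pack are hypothetical. The sketch covers only the organisation-side policy and does not call any vendor prompt-caching feature.

```python
import hashlib
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class ContextPack:
    name: str
    version: str
    owner: str    # accountable team for this context
    content: str  # standards, patterns, known constraints

    @property
    def digest(self) -> str:
        # Content hash: identical inputs produce identical digests, so reuse is detectable.
        return hashlib.sha256(self.content.encode("utf-8")).hexdigest()


class ContextRegistry:
    """Hypothetical registry: assemble each (name, version) once, then reuse it."""

    def __init__(self):
        self._packs = {}
        self.builds = 0
        self.reuses = 0

    def get(self, name: str, version: str,
            assemble: Callable[[str, str], ContextPack]) -> ContextPack:
        key = (name, version)
        if key in self._packs:
            self.reuses += 1
            return self._packs[key]
        self.builds += 1
        pack = assemble(name, version)
        self._packs[key] = pack
        return pack


def assemble_payments_pack(name, version):
    # Stand-in for the expensive step: gathering standards and constraints.
    return ContextPack(name, version, owner="platform-team",
                       content="Use idempotency keys. Never log card numbers.")


registry = ContextRegistry()
a = registry.get("payments-standards", "1.2", assemble_payments_pack)
b = registry.get("payments-standards", "1.2", assemble_payments_pack)
```

Keying on an explicit version means a change to the standards produces a new pack rather than silently altering what every workflow sends.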

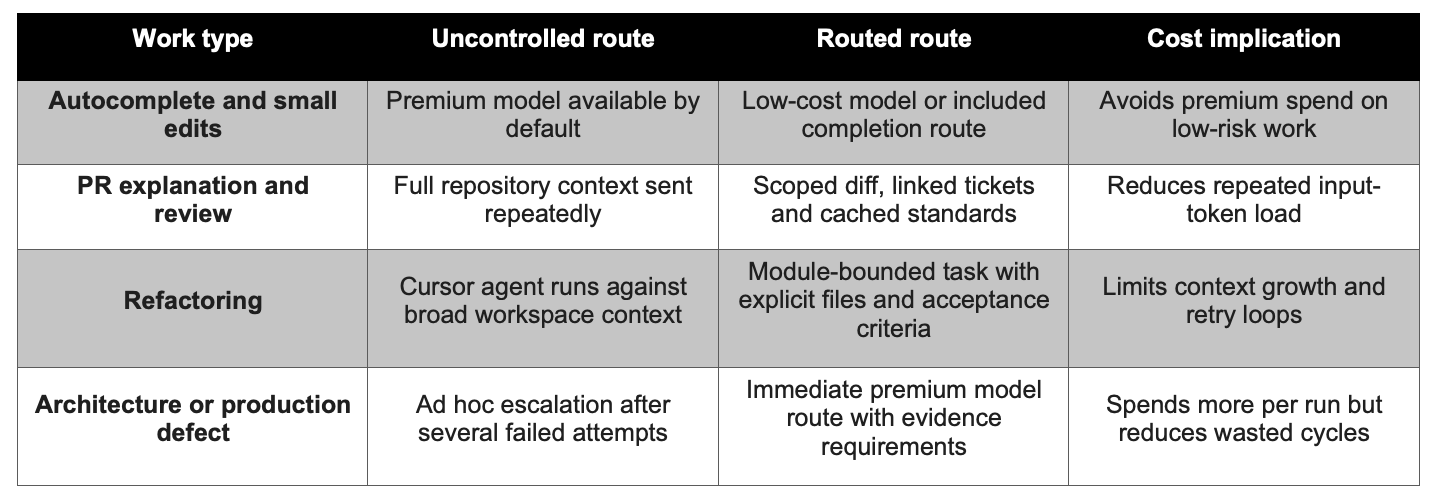

Consider a hypothetical product squad using GitHub Copilot for IDE assistance and pull-request review, and Cursor for deeper agentic development.

Without routing policy, the team may default to premium reasoning models for routine edits, keep full repository context open, allow long-lived sessions to accumulate history, and let agents retry or replan without a spend boundary.

With routing policy, the same squad can use lightweight models for autocomplete and small edits, reserve premium models for architectural decisions or difficult debugging, scope Cursor context to the relevant module, and require human checkpoints before expensive multi-step execution.

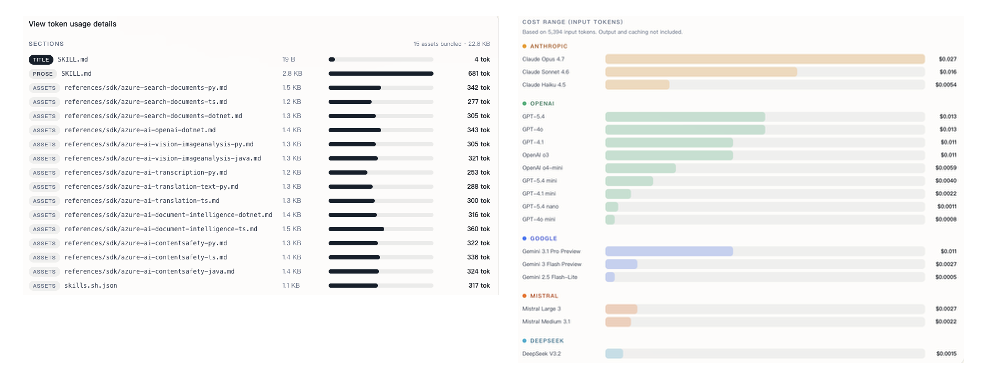
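The routing policy in the squad example can be expressed as a small lookup plus a checkpoint rule. The tier names, task types and module-prefix scoping below are hypothetical. Neither GitHub Copilot nor Cursor is called, and in practice the model choice would be configured inside each tool.

```python
from enum import Enum


class Tier(Enum):
    LIGHT = "lightweight"
    PREMIUM = "premium"


# Hypothetical policy table: task type -> model tier.
ROUTES = {
    "autocomplete": Tier.LIGHT,
    "small_edit": Tier.LIGHT,
    "architecture": Tier.PREMIUM,
    "hard_debugging": Tier.PREMIUM,
}


def route(task_type: str, multi_step: bool = False,
          human_approved: bool = False) -> tuple:
    """Pick a tier; hold premium multi-step work until a human approves it."""
    tier = ROUTES.get(task_type, Tier.LIGHT)  # unknown work defaults to the cheap tier
    if tier is Tier.PREMIUM and multi_step and not human_approved:
        return tier, "awaiting_approval"
    return tier, "run"


def scope_context(paths: list, module: str) -> list:
    """Keep only files under the module being changed, not the whole repository."""
    prefix = module.rstrip("/") + "/"
    return [p for p in paths if p.startswith(prefix)]
```

Defaulting unknown task types to the lightweight tier keeps mistakes cheap; a team that values quality over cost for unclassified work could reverse that default.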

Context affects both quality and cost. Vendors already support context reuse through prompt caching and reusable skill systems. Organisations that manage context with ownership, version control and reuse policies should see lower cost per task and more predictable outcomes than those that let context be assembled inside individual workflows. For example, Hitachi Digital Services tracks how many tokens agentic skills consume across different models:

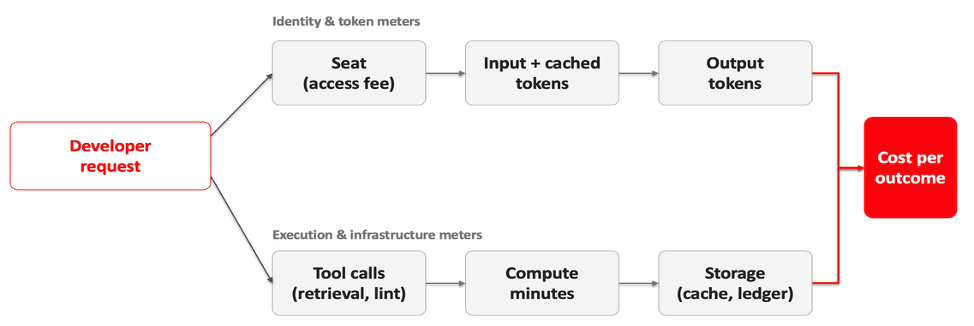
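Per-skill, per-model tracking of this kind reduces to grouping run telemetry by skill and model. The telemetry rows, skill names and model names below are invented for illustration. Fewest tokens is not the same as cheapest when per-token prices differ, and it says nothing about output quality, so this is one input to routing rather than a decision rule.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical telemetry: (skill, model, tokens consumed in one run).
runs = [
    ("code_review", "model_a", 12_000),
    ("code_review", "model_a", 14_000),
    ("code_review", "model_b", 6_000),
    ("code_review", "model_b", 8_000),
    ("test_generation", "model_a", 9_000),
    ("test_generation", "model_b", 15_000),
]


def tokens_by_skill_and_model(runs):
    buckets = defaultdict(list)
    for skill, model, tokens in runs:
        buckets[(skill, model)].append(tokens)
    return {key: {"runs": len(v), "total": sum(v), "mean": mean(v)}
            for key, v in buckets.items()}


def leanest_model_per_skill(stats):
    """For each skill, the model with the lowest mean tokens per run."""
    best = {}
    for (skill, model), s in stats.items():
        if skill not in best or s["mean"] < stats[(skill, best[skill])]["mean"]:
            best[skill] = model
    return best


stats = tokens_by_skill_and_model(runs)
```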

How cost accumulates across meters

Cost depends on three variables: token pricing, included usage, and workload shape (how many calls a task makes, how much context each call carries and how much output it produces). The same task can differ in cost by an order of magnitude depending on model selection and context handling. Caching and reuse materially affect cost.
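The three variables can be combined in a simple cost model. The prices, the cached-input discount and both workloads are hypothetical, and the included allowance is simplified to a per-task currency amount, whereas real plans usually apply allowances per billing period.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Pricing:
    input_per_m: float         # price per million uncached input tokens
    cached_input_per_m: float  # price per million cached input tokens (assumed discount)
    output_per_m: float        # price per million output tokens
    included_allowance: float = 0.0  # usage already covered by the plan, in currency


@dataclass(frozen=True)
class Workload:
    calls: int              # model calls per task, including retries and replans
    context_tokens: int     # input tokens sent on each call
    cached_fraction: float  # share of input served from cache (0.0 to 1.0)
    output_tokens: int      # output tokens per call


def task_cost(p: Pricing, w: Workload) -> float:
    total_in = w.calls * w.context_tokens
    cached = round(total_in * w.cached_fraction)
    uncached = total_in - cached
    out = w.calls * w.output_tokens
    gross = (uncached * p.input_per_m
             + cached * p.cached_input_per_m
             + out * p.output_per_m) / 1_000_000
    # Included usage absorbs spend first; only the remainder is billed.
    return max(0.0, gross - p.included_allowance)


premium = Pricing(input_per_m=15.0, cached_input_per_m=1.5, output_per_m=75.0)
unmanaged = Workload(calls=20, context_tokens=150_000, cached_fraction=0.0, output_tokens=2_000)
managed = Workload(calls=5, context_tokens=20_000, cached_fraction=0.8, output_tokens=1_500)
```

With the same model and the same prices, fewer calls, narrower context and a high cache share bring the managed task in at well under a tenth of the unmanaged one; routing that task to a cheaper tier would widen the gap further.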

Procurement questions to ask before commitment

- How are tokens, caching, tools and compute priced?

- What happens when models are substituted, deprecated or repriced?

- Do credits expire, roll over or pool across workspaces?

- Can usage and audit data support chargeback and AI AgenticOps reporting?

- Where are the responsibility boundaries when workloads span providers?
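The credit-expiry question has a direct price effect, sketched below for a single workspace. The purchase size, price and monthly demand are invented; pooling across workspaces and overage billing are not modelled, and demand above the available balance is simply left unmet.

```python
def effective_credit_price(monthly_purchase: int, price_per_credit: float,
                           monthly_demand: list, rollover_cap: int):
    """Return (price paid per credit actually used, credits that expired)."""
    balance = paid_credits = used = expired = 0
    for demand in monthly_demand:
        balance += monthly_purchase
        paid_credits += monthly_purchase
        consumed = min(balance, demand)  # demand above balance is unmet (no overage modelled)
        used += consumed
        balance -= consumed
        carried = min(balance, rollover_cap)
        expired += balance - carried
        balance = carried
    paid = paid_credits * price_per_credit
    return (paid / used if used else float("inf")), expired


demand = [400, 1_500, 600]
no_rollover = effective_credit_price(1_000, 0.05, demand, rollover_cap=0)
full_rollover = effective_credit_price(1_000, 0.05, demand, rollover_cap=10**9)
```

With identical list prices and demand, expiring credits raise the effective price per used credit compared with full rollover, which is why the expiry terms belong in the comparison alongside the headline rate.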

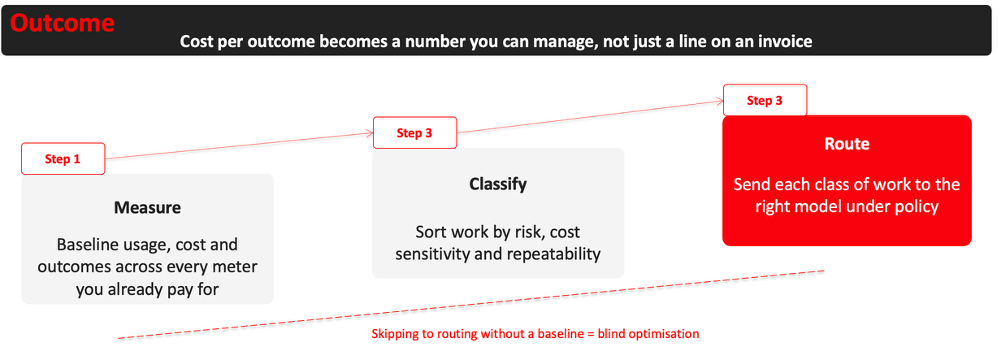

Measure first. Classify second. Route third.

The near-term risk is not that agentic coding tools fail to deliver value, but that they deliver it through unmanaged consumption.

Leaders should be able to answer five questions: what is being metered, how work is routed, who owns the context, what each outcome costs, and whether the spend improves quality, speed or risk reduction.